Snapchat Announces AR Support for Apple's iPhone 12

This week, Apple announced the launch of its new iPhone 12 lineup. Among other advances, the Pro models include a new LiDAR scanner, which will improve digital mapping of real-world scenes. LiDAR, which stands for 'light detection and ranging', measures how long it takes for light to reach an object and reflect back. In practical terms, this enables more accurate depth mapping by establishing a better understanding of the objects within a frame, and will facilitate the creation of AR tools that can better interact with, and respond to, real-world cues and objects.
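
To make the timing idea concrete, here is a minimal sketch of the time-of-flight arithmetic, assuming a single light pulse and an idealized measurement. The function name is illustrative and is not part of any Apple or Snapchat API; real scanners also handle noise, many simultaneous points, and calibration.

```python
# Time-of-flight sketch: distance is half the round trip at the speed of light.
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact value, by definition of the metre


def distance_from_round_trip(round_trip_seconds: float) -> float:
    """Return the one-way distance in metres for a pulse's round-trip time.

    Hypothetical helper for illustration only.
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the object and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2


if __name__ == "__main__":
    # A pulse that returns after 20 nanoseconds.
    print(f"{distance_from_round_trip(20e-9):.2f} m")
```

A 20-nanosecond round trip works out to roughly 3 metres, which shows why depth sensing at room scale depends on very fine timing.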

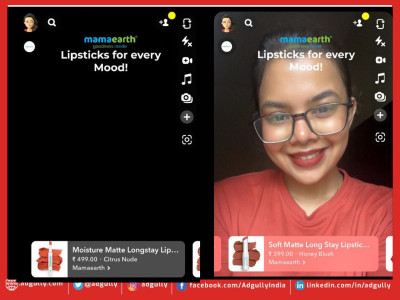

The Lens Studio 3.2 update adds new search bars, making it easier for developers to find specific templates or Material Editor nodes. Snapchat has also extended its Face Mesh and Hand Tracking features to support hand segmentation, and tracking against the entire head rather than just the face. Features include:

- Head Mesh – Includes a new ‘skull’ property within the Face Mesh asset, which allows for the tracking of a user’s whole head shape;

- Hand Segmentation – Segments an image against a hand, and can occlude objects behind hands. Hand segmentation works in 2D space (Screen Space) to apply a segmentation effect to a user’s hands, allowing hand gestures to control effects;

- Behavior Script – Used to set up different effects and interactions through a dropdown menu. Developers can also use the ‘Behavior Helper Script’ to choose different triggers like face or touch interactions, and respond to them with effects such as enabling objects.
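
The occlusion idea behind hand segmentation can be sketched in a few lines. This is a conceptual illustration only, not Lens Studio code: the function name, the grid-of-strings "image", and the 0/1 mask are all assumptions made for the example. Wherever the mask marks a hand, the camera pixel stays on top, so the effect appears to sit behind the hand.

```python
from typing import List, Optional

Pixel = str  # stand-in for a colour value, to keep the sketch readable


def composite_with_occlusion(
    camera: List[List[Pixel]],
    effect: List[List[Optional[Pixel]]],
    hand_mask: List[List[int]],
) -> List[List[Pixel]]:
    """Overlay an effect on the camera image, hiding it behind hands.

    Hypothetical helper: hand_mask holds 1 where a hand was segmented in
    screen space and 0 elsewhere; effect holds None where nothing is drawn.
    """
    output = []
    for cam_row, fx_row, mask_row in zip(camera, effect, hand_mask):
        row = []
        for cam_px, fx_px, is_hand in zip(cam_row, fx_row, mask_row):
            if is_hand or fx_px is None:
                row.append(cam_px)  # hand in front, or no effect here
            else:
                row.append(fx_px)   # effect drawn over the camera image
        output.append(row)
    return output


camera = [["c", "c"], ["c", "c"]]
effect = [[None, "E"], ["E", "E"]]
hand_mask = [[0, 0], [0, 1]]
print(composite_with_occlusion(camera, effect, hand_mask))
```

In this toy frame, the bottom-right pixel keeps the camera value because the mask marks it as a hand, even though the effect covers that position.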
